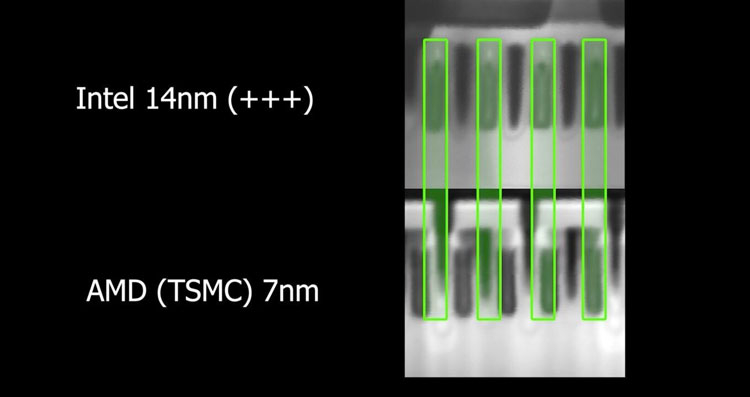

The story of chips begins, Lee says, in Silicon Valley in the late 1950s, when engineers began exploring a special characteristic of silicon. “It’s an excellent semiconductor material,” Lee says, “because it has the ability to partially conduct electrical current, which makes it great for working with electrical components such as transistors, the on-off switches that relay information as ones or zeroes and form the basis of computing.”

By the early 1960s, engineers at technology company Fairchild Semiconductor were trying to figure out ways to increase the capabilities of computers by adding more and more transistors to the semiconductor chips that processed and stored their information. One figure who came to prominence in this space was Gordon Moore.

“Moore was a Fairchild co-founder who went on to co-found Intel and famously observed that the number of circuit components you could put on a chip would double at a fixed rate, around every 18 to 24 months,” Lee says. “This came to be known as Moore’s law, which has set the cadence for modern advances in computing from 1965 into the mid-2000s.”

Moore’s original observation was an economic and empirical one, Lee says. Moore argued that component integration reduces costs and that, over time, the most cost-effective integrated circuits are those that integrate more and more components. This observation has held true for several decades and has been a driving force behind the rapid advancement of computer technology.

Lee notes that a phenomenon called Dennard scaling played a major role in shaping chip evolution. “A chip designer from IBM, Robert Dennard, proposed a set of guidelines to make transistors smaller,” Lee says. “Dennard argued that operating voltages should scale down with transistor dimensions, allowing chips to shrink both in size and power consumption.

“So, riding on the coattails of smaller transistors that allowed you to put more of them on a single chip, we got faster, more energy-efficient, and capable computation and memory,” Lee says. “And all of this happens from generation to generation at an unprecedented pace. It would be like having airplanes that got two times quicker every iteration.”

Lee points out that these laws held for much longer than Dennard or Moore anticipated.
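To put the doubling rate and Dennard's guidelines in rough numerical terms, here is a minimal Python sketch. It is an illustration under stated assumptions, not a calculation from Lee: the 40-year span (roughly 1965 to the mid-2000s) is an approximation, the function names are hypothetical, and the Dennard model is the textbook idealization in which dynamic power per transistor is proportional to capacitance × voltage² × frequency.

```python
def growth_factor(years: float, doubling_months: float) -> float:
    """Multiplicative growth after `years` if a count doubles every `doubling_months`."""
    return 2.0 ** (years * 12.0 / doubling_months)


def dennard_scale(k: float) -> dict:
    """Idealized Dennard scaling when dimensions and voltage both shrink by a factor k.

    Assumes dynamic power per transistor ~ C * V^2 * f (textbook idealization).
    All values are relative to the previous generation (1.0 = unchanged).
    """
    capacitance = 1.0 / k   # capacitance shrinks with transistor dimensions
    voltage = 1.0 / k       # operating voltage scales down with dimensions
    frequency = k           # smaller transistors switch faster
    area = 1.0 / k ** 2     # each transistor takes up less chip area
    power = capacitance * voltage ** 2 * frequency
    return {"area": area, "power": power, "power_density": power / area}


if __name__ == "__main__":
    # Assumed span: about 40 years, at both ends of the 18-to-24-month range.
    for months in (18, 24):
        print(f"doubling every {months} months for 40 years: "
              f"x{growth_factor(40, months):,.0f}")
    # One generation that shrinks dimensions and voltage by a factor of 2.
    print(dennard_scale(2.0))
```

Doubling every 24 months for 40 years means 20 doublings, or roughly a million-fold increase. In the idealized Dennard model, power per transistor falls in step with its area, so power density stays constant while more transistors fit on the chip.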

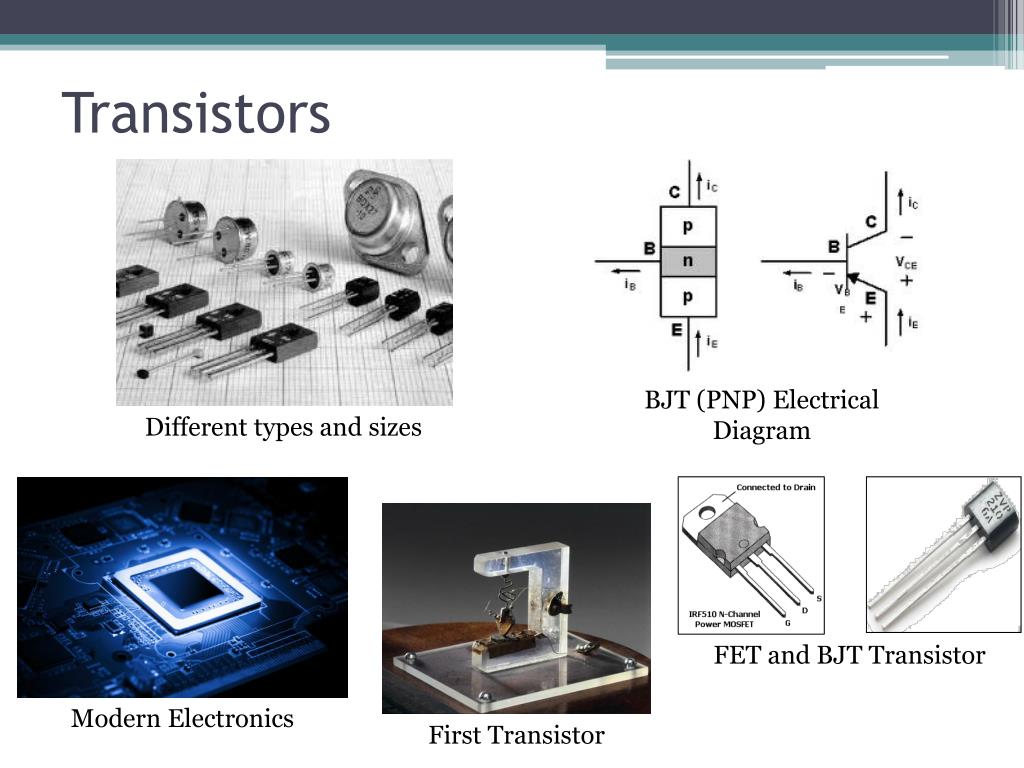

Following the global supply chain collapse brought on by the COVID-19 pandemic, it became obvious that many facets of modern life are quietly ruled by a tiny piece of technology known as the semiconductor chip. From our phones and fridges to fighter jets, these small, flat pieces of semiconductor material play crucial roles in facilitating data processing and storage. Though chips are seemingly ubiquitous, they are deceptively complex. At the heart of the evolution of these tiny but increasingly powerful technologies lies a principle known as Moore’s law, named for Gordon Moore, an engineer who co-founded Intel Corporation. Penn Today spoke with the School of Engineering and Applied Science’s Ben Lee to discuss the rapid evolution of computers and to reflect on the seminal work of Moore, who died in March.

Transistors replaced vacuum tubes in the mid-20th century. They were initially made of germanium and later silicon, leading to the development of integrated circuits containing millions to billions of transistors on a single chip. The metal-oxide-semiconductor field-effect transistor (MOSFET) became the dominant type due to its smaller size, faster speed, and greater energy efficiency. Today, MOSFETs are essential components in modern electronic devices such as computers, smartphones, and power electronics.

We’re already seeing the processor industry fracture, with a movement away from super-fast all-rounder hardware and toward more specialized chips. To that end, Intel recently bought Movidius, a company that makes chips dedicated to computer vision tasks. Nvidia, meanwhile, is selling specialized AI chips to an industry eager to capitalize on machine learning. More efficient chip designs will also help increase computational speed at lower rates of power consumption. Microsoft and Intel are both working on using reconfigurable chips known as FPGAs to run artificial-intelligence algorithms more efficiently, for instance. And the Japanese telecom and Internet company SoftBank recently acquired British chip designer ARM for its incredibly successful low-power chips, which will provide processing power for the growing crop of Internet-of-things hardware.

Less specialized processors will likely change shape to increase processing power. Chips will increasingly use multiple layers of circuitry to increase transistor density, for example. Or, just maybe, they might shrink down using the Berkeley Lab breakthrough to achieve the same ends.

(Read more: Science, “Chip Makers Admit Transistors Are About to Stop Shrinking,” “Moore’s Law Is Dead.”)
